Inspired by a recent thread, “AI doesn’t have to replace talent”, I have started to look into AI tools a bit more to see how I could make use of them myself.

I began with AI Render, but found that unless you use your own ComfyUI setup, it is a bit limited, because newer and better models are released all the time. It was still fun to play with the image generation, but it was also hard to control.

I’m not after realism; I want to continue with the style that I developed for my recent films. It is a sort of semi-realism, similar to what can be found in some graphic novels.

So I am looking to generate animation clips in my preferred style. You can use a text-only model, which gives you little control, or you can use image-to-video, with the image serving as the first frame. That image has to establish the look of the clip.

Because I still wanted iClone somewhere in the pipeline, I created a project of the scene I wanted. I then rendered one frame out as an image and had AI convert it into the style I was after, which has to be defined in the prompt.

For both image and video generation there are many models to choose from. You look for visual quality, but also for how well a model understands the prompt. Part of that depends on how accomplished you are at writing the prompt, as I found out.

It helps to have a platform where you can easily try out different models. I use Freepik (https://www.freepik.com/), which I was already subscribed to for its images and videos.

For images, I ended up using Nano Banana Pro, with the prompt: Change to semi-realistic 3D cartoon style with smooth lines and colors. Before that, I used Adobe Firefly, until it rejected one of my images (the one shown below, which has nothing objectionable in it).

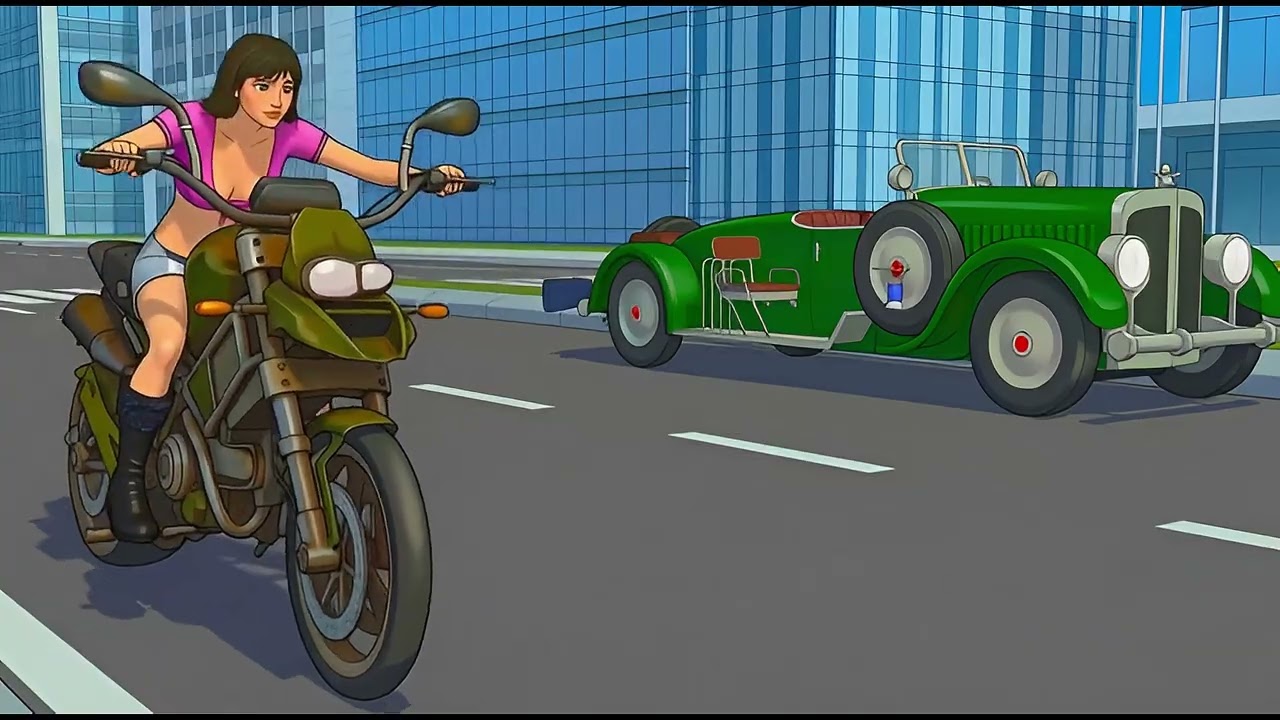

Anyway, enough talk. Here is an example with Nano Banana:

iClone render:

Cartoon version:

For image-to-video, I use Seedance 1.0. Here is a short clip (no audio):

The prompt for this one is:

Young woman with serious expression sits at a bar and is holding a glass with red wine.

Outside, cars are driving by on the street in both directions.

A man stands on the left, watching the woman.

After a few moments, the man walks up to the woman.

Meanwhile, the woman brings the rim of the glass to her lips, tilts the glass so the wine touches her lips and then takes a sip from the wine.

She then puts the glass back on the bar surface and smiles with satisfaction.

She then looks at the man.

I had to be quite elaborate in describing the woman taking a sip of wine, as just stating “takes a sip of wine” was not enough.

So prompt writing is a real skill: it needs to be developed, it comes with its own challenges, and it also requires creativity…

To be continued…